Last November, the BANYAN release of the Kairos products brought significant improvements across the product range. The software teams delivered on time and also prepared the architecture to support future products and functionality.

Improved reasoning

Common to all products is the new way of executing the reasoning. In short, the reasoning engine application makes the logical connections between root causes, sensor values and consequences based on the Multi Level Flow Model. The new version (Reasoning 2.0) extends the engine from relying only on deviations from normal to also including sensors without deviation as part of the evidence. This significantly improved the accuracy of the analysis. Claus Myllerup (CTO) says: "The pattern of sensors used to recognize a root cause is now much more complete. We can say that our previous approach was similar to the DCS giving alarms and partly leaving the reasoning about the combination of multiple alarms (and sensors in normal state) to the operator. Today we can present a complete analysis where the operator will see all sensors on the propagation path from a root cause to a consequence being used as evidence".
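To illustrate why sensors in normal state matter as evidence, here is a minimal sketch. It is not the Kairos reasoning engine. The root causes, sensor names and expected patterns are invented for this example, and simple pattern matching stands in for the model-based reasoning described above.

```python
# Hypothetical expected sensor patterns per root cause (invented for illustration).
EXPECTED = {
    "pump_failure": {"flow": "low", "pressure": "low", "level": "normal"},
    "valve_stuck_closed": {"flow": "low", "pressure": "low", "level": "high"},
}


def match_deviations_only(observed):
    """Use only sensors that deviate from normal as evidence."""
    deviations = {s: v for s, v in observed.items() if v != "normal"}
    return sorted(
        cause
        for cause, pattern in EXPECTED.items()
        if all(pattern.get(s) == v for s, v in deviations.items())
    )


def match_all_sensors(observed):
    """Use every sensor, including those in normal state, as evidence."""
    return sorted(
        cause
        for cause, pattern in EXPECTED.items()
        if all(pattern.get(s) == v for s, v in observed.items())
    )


observed = {"flow": "low", "pressure": "low", "level": "normal"}
print(match_deviations_only(observed))  # both causes remain candidates
print(match_all_sensors(observed))      # the normal level rules one out
```

In this toy case the two deviating sensors alone cannot separate the candidates, while the sensor that stays normal does.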

Counteraction

The ability to detect root causes and predict consequences is key to establishing situational awareness as a basis for making good decisions in difficult situations. Our Control Room Assistant (CRA) will also give advice on how to deal with these situations. During development we learned that the first step of taking action often involves verifying the root cause, and then either correcting the cause or, if it's urgent, preventing the potential consequences.

Verify

Different scenarios may look similar in the alarm system, and the experienced control room operator often consults with the field operator to confirm root causes. This work process has been captured in the verify step. When a root cause is selected, our CRA suggests verification steps and accepts user input as part of the reasoning. This could be any observation that is not available in the control room. It is important to know that only about 30% of the instrumentation is connected to the DCS and available to the operator.

Correct

The correct part of counteraction is used to give clear advice on how to permanently correct the root cause. This will most likely be a maintenance activity based on an operational or maintenance procedure. Experience from the operation teams may also be captured here, enabling operational learning in the model. If correct is an activity that requires a maintenance work order and will take time, the prevent step will be useful.

Prevent

The prevent step helps the control room operator prevent the root cause from propagating further into consequences. These are the immediate steps to take if correct is not a feasible option. The prevent step uses the results of the reasoning to find the next actuator on the propagation path from the root cause, so that the consequences can be prevented.
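The idea of finding the next actuator on a propagation path can be sketched as a graph search. This is only an illustration under stated assumptions: the graph, the node names and the set of actuators are hypothetical, and a plain breadth-first search stands in for whatever the reasoning results actually provide.

```python
from collections import deque

# Hypothetical propagation graph: each node maps to its downstream nodes.
GRAPH = {
    "root_cause": ["sensor_FT1"],
    "sensor_FT1": ["valve_V1"],
    "valve_V1": ["sensor_LT2"],
    "sensor_LT2": ["valve_V2"],
    "valve_V2": ["consequence"],
}
# Hypothetical set of nodes the operator can act on.
ACTUATORS = {"valve_V1", "valve_V2"}


def find_path(graph, start, goal):
    """Breadth-first search returning one shortest path, or None if unreachable."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def next_actuator(graph, actuators, root_cause, consequence):
    """Return the first actuator downstream of the root cause on the path."""
    path = find_path(graph, root_cause, consequence)
    if path is None:
        return None
    return next((node for node in path[1:] if node in actuators), None)


print(next_actuator(GRAPH, ACTUATORS, "root_cause", "consequence"))
```

With this example graph the search returns `valve_V1`, the first actuator between the root cause and the consequence.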

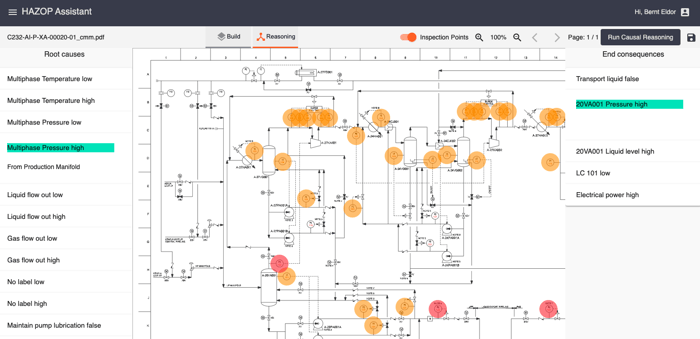

HAZOP Assistant

HAZOP Assistant User Interface

The first MVP (Minimum Viable Product) of the HAZOP assistant was released as part of BANYAN. It was developed in a Joint Industry Project with a major E&P company, Vysus and Kairos, supported by Innovation Norway. One of the outputs from this project is the first release of the HAZOP assistant, together with a list of development areas for the next release, which will be the first commercial release. The following basic functionality is in place.

P&ID View

The HAZOP assistant P&ID view displays Process Hazards (Consequences) and Initiating Events (Root causes) as an overlay on the P&ID diagrams. The sensors, actuators and safeguards are highlighted on the P&ID. This graphical presentation gives more insight and improves quality in the HAZOP meeting.

HAZOP tabular view

The HAZOP tabular view provides an Excel-type view of the HAZOP report. This view may be used for sorting and filtering the results, and possibly for focusing the HAZOP meeting on the difficult scenarios and leaving some of the obvious ones to a pre-HAZOP meeting activity.

Sensor Analysis

Our sensor analysis toolbox has been extended to provide a map of the root causes where the detection is ambiguous, i.e. root causes that the instrumented sensors are not able to distinguish from each other. In these cases a verification step may be required. This tool can also be valuable when designing plants or setting up machine learning. If there is not enough information, the limitation is the same for our reasoning, for human beings and for any other algorithm, such as machine learning.
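One simple way to picture such an ambiguity map is to group root causes by the sensor pattern they would produce. This is a sketch, not the actual sensor analysis tool. The cause names, sensor tags and signatures below are made up for the example.

```python
from collections import defaultdict

# Hypothetical sensor signatures per root cause (invented for illustration).
SIGNATURES = {
    "cause_A": {"PT1": "high", "FT1": "low"},
    "cause_B": {"PT1": "high", "FT1": "low"},
    "cause_C": {"PT1": "low", "FT1": "low"},
}


def ambiguous_groups(signatures):
    """Group root causes that share an identical sensor signature."""
    groups = defaultdict(list)
    for cause, pattern in signatures.items():
        # Sort the items so equal patterns give equal keys regardless of order.
        key = tuple(sorted(pattern.items()))
        groups[key].append(cause)
    # Only groups with more than one cause are ambiguous.
    return [sorted(causes) for causes in groups.values() if len(causes) > 1]


print(ambiguous_groups(SIGNATURES))
```

Here `cause_A` and `cause_B` end up in the same group, so telling them apart would need extra information such as a verification step or an additional sensor.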