Failure propagation, and the potential severity of its consequences, is a key factor to consider when assessing the reliability and stability of any processing system. Failures can occur due to a variety of causes, such as hardware or software malfunctions, environmental factors, or user errors. Left unchecked, a propagating failure can be devastating, resulting in data loss, system downtime, and other costly consequences. It is therefore necessary to understand how failures propagate through systems so that risks can be detected and mitigated before they become critical issues.

Effective Methods for Detecting Failures in Processing Plants

In processing systems, it is crucial to detect any potential failure before it has a chance to propagate. By understanding the causes of failure and how it typically propagates, organizations can develop proactive measures to minimize risks and prevent catastrophic consequences. There are a variety of methods for detecting failures in processing plants, including monitoring system logs, performing preventive maintenance, and utilizing automated detection tools.

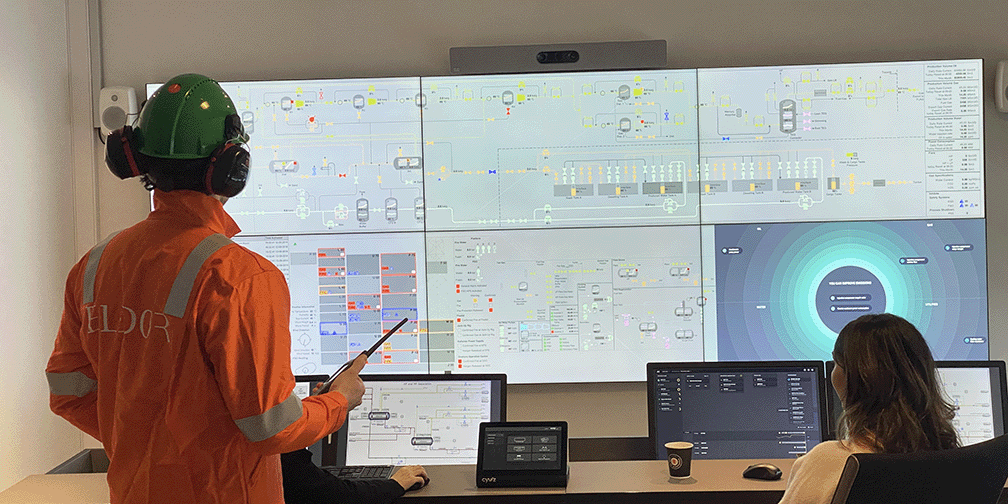

Monitoring system logs is one of the most effective ways to detect suspicious activity or potential malfunctions in processing systems. Process control systems provide valuable insights into the health and performance of the processing system and alert personnel if any irregularities are detected. This can include monitoring system parameters such as temperature, pressure, or vibration levels. It is also important to perform regular system backups and to review security policies. These practices can help identify potential threats and vulnerabilities before they become critical.

Performing preventive maintenance is another key factor in ensuring the reliability and stability of processing systems. Regular inspection of equipment and checking for signs of wear and tear can help identify potential problems before they become serious issues. Scheduling periodic software updates or creating a rigorous testing procedure for new components can also help mitigate any bugs that could cause failure propagation.

Automated detection tools are also becoming increasingly popular for quickly identifying errors or suspicious behavior in processing systems. These tools leverage artificial intelligence (AI) algorithms to analyze large volumes of data at high speed, enabling them to alert personnel immediately when anomalies are detected. They can often be configured with customizable thresholds so that only events above a certain level trigger an alert, filtering out false positives caused by natural fluctuations in system activity.
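The threshold idea can be illustrated with a minimal Python sketch. It is not how any particular detection product works: the function name `find_alerts`, the `min_consecutive` persistence rule, and the sample temperature readings are all assumptions made for illustration. The sketch fires an alert only after a reading has stayed above the threshold for several samples in a row, which is one simple way to ignore brief spikes.

```python
from typing import List, Sequence


def find_alerts(
    readings: Sequence[float],
    threshold: float,
    min_consecutive: int = 3,
) -> List[int]:
    """Return the indices at which an alert fires.

    An alert fires once a reading has stayed above `threshold` for
    `min_consecutive` samples in a row, so isolated spikes are ignored.
    (The persistence rule is an illustrative assumption.)
    """
    alerts: List[int] = []
    run_length = 0  # how many consecutive readings exceeded the threshold
    for index, value in enumerate(readings):
        if value > threshold:
            run_length += 1
            if run_length == min_consecutive:
                alerts.append(index)  # fire once per sustained excursion
        else:
            run_length = 0  # excursion ended, start counting again
    return alerts


# Hypothetical temperature readings in degrees Celsius.
temperatures_c = [71.0, 72.5, 86.0, 72.0, 85.5, 86.2, 87.1, 88.0, 73.0]
print(find_alerts(temperatures_c, threshold=85.0, min_consecutive=3))  # prints [6]
```

The single spike at index 2 is ignored, while the sustained rise starting at index 4 triggers one alert at index 6. Raising `min_consecutive` reduces false positives at the cost of a slower reaction.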

Best Practices to Reduce Risk from Failure Propagation

The best way to reduce the risk of failure propagation in processing systems is to take proactive measures and address any potential issues before they become critical. By implementing a comprehensive set of practices, organizations can reduce the likelihood of failure propagation and ensure their systems remain reliable and stable over time.

Regular Maintenance: Performing regular preventive maintenance is an essential part of reducing the risk of equipment and system failure. It enables personnel to take corrective measures before an emerging issue becomes serious. It is also important to monitor system logs and review security policies regularly to quickly detect any suspicious activity or irregularities in system performance.

Use Automated Detection: Automated detection tools can provide valuable insight into potential problems before they occur. Digital twins, for example, can be valuable for predicting risks and preventing their end consequences. These systems can also alert stakeholders in an organization to potential issues so that appropriate action can be taken swiftly, further reducing the chances of harmful outcomes.
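A real digital twin models far more than this, but the core idea of tracing a fault to its end consequences can be sketched as a walk over a dependency graph. In the Python sketch below, the function name `downstream_effects`, the `plant` mapping, and all equipment names are hypothetical, and the sketch assumes that a failure always propagates to every unit fed by the failed one.

```python
from collections import deque
from typing import Dict, List


def downstream_effects(feeds: Dict[str, List[str]], failed: str) -> List[str]:
    """Return every unit reachable from `failed`, in breadth-first order.

    `feeds` maps each unit to the units directly downstream of it.
    """
    affected: List[str] = []
    seen = {failed}
    queue = deque([failed])
    while queue:
        unit = queue.popleft()
        for neighbor in feeds.get(unit, []):
            if neighbor not in seen:  # visit each unit only once
                seen.add(neighbor)
                affected.append(neighbor)
                queue.append(neighbor)
    return affected


# Hypothetical plant layout: each key feeds the units in its list.
plant = {
    "cooling_pump": ["heat_exchanger"],
    "heat_exchanger": ["reactor"],
    "reactor": ["separator", "relief_valve"],
    "separator": ["storage_tank"],
}
print(downstream_effects(plant, "cooling_pump"))
# prints ['heat_exchanger', 'reactor', 'separator', 'relief_valve', 'storage_tank']
```

Units near the front of the list are affected first, which suggests where early detection or isolation is likely to pay off most.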

Improve System Design: Organizations need to plan thoroughly when developing their processing systems so that the resulting design is resilient against failures. This includes incorporating redundancies, such as backup power sources or extra switches that can take over if the primary components fail. It also involves selecting appropriate equipment, specifically parts that are designed for high reliability. This minimizes the impact of wear over time and provides additional protection against environmental factors, such as temperature changes or dust accumulation.
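The benefit of redundancy can be estimated with a short calculation. The Python sketch below uses the standard formula for parallel redundancy, 1 - (1 - a)^n, which holds only under the assumption that the redundant units fail independently of one another. The function name `parallel_availability` and the 99% figure are illustrative, not values from any specific plant.

```python
def parallel_availability(unit_availability: float, units: int) -> float:
    """Availability of `units` redundant components in parallel.

    The system is up if at least one unit is up. Assumes independent
    failures; a shared cause (e.g. a common power feed) breaks this.
    """
    if not 0.0 <= unit_availability <= 1.0:
        raise ValueError("unit_availability must be between 0 and 1")
    if units < 1:
        raise ValueError("units must be at least 1")
    # Probability that every unit is down at once, subtracted from 1.
    return 1.0 - (1.0 - unit_availability) ** units


# Illustrative figure: a power source that is available 99% of the time.
for count in (1, 2, 3):
    print(count, f"{parallel_availability(0.99, count):.6f}")
```

Under these assumptions, adding one backup raises availability from 99% to roughly 99.99%. Common-cause failures reduce this gain in practice, which is why backups are often given separate power feeds and locations.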

Train Personnel: To maximize the success rate of mitigation efforts, operations personnel need to understand how failure propagation works and the potential risks that are associated with their systems. Organizations should develop formal training programs for their personnel so that they are aware of how failures typically develop throughout their systems and how best practices can help prevent issues from occurring. Having personnel educated about potential risks will allow them to recognize problems faster and respond more effectively than if they had no prior knowledge or training on the potential issues.

By following these practices, many organizations can significantly reduce the likelihood of catastrophic consequences from failure propagation in their processing systems. Implementing them will help ensure that systems remain reliable and stable over time, while decreasing the costs associated with downtime or data loss resulting from unexpected malfunctions or user errors.

Summary

Today's complex processing plants contain thousands of pieces of equipment, instruments, and parts, so it is inevitable that some of them will break or fail at some point. Even though preventive maintenance programs address (and in most cases avoid) the major risks and breakdowns, understanding the causes and propagation of a fault is imperative to avoid potentially severe consequences. By implementing automated detection and analysis tools (digital twins) along with new best practices, organizations can protect their systems against catastrophic consequences, while simultaneously reducing costs associated with downtime or data loss.